The easiest way to fail in 2026 is to build a thin generative AI wrapper. The hardest but most lucrative path is to build an AI-native product in which the foundation model is merely a commodity layer beneath your proprietary workflow engine.

The Death of the "Chat PDF" Clone

Two years ago, launching a tool that summarized PDFs using the OpenAI API could land you in Y Combinator. Today, it lands you in obscurity. The barrier to entry for building thin wrappers around large language models (LLMs) has dropped to nearly zero. Apple, Google, and Microsoft are building these features directly into their operating systems. If your entire product value can be replicated by a single prompt in ChatGPT, you do not have a company.

Defining the AI-Native Architecture

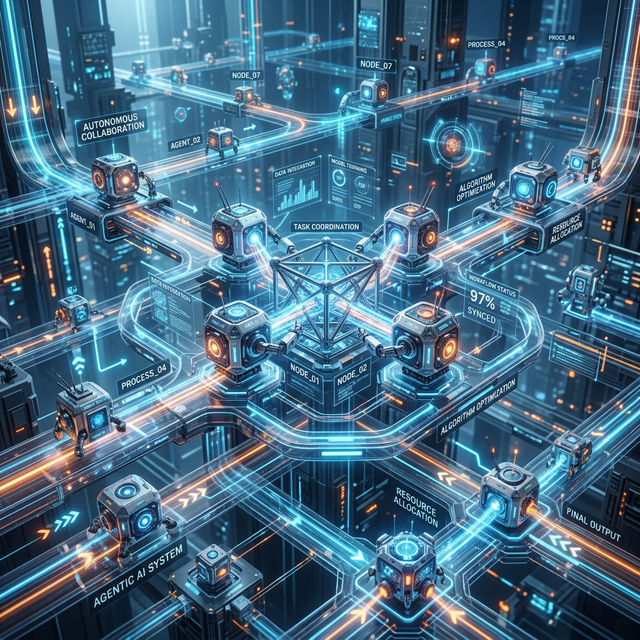

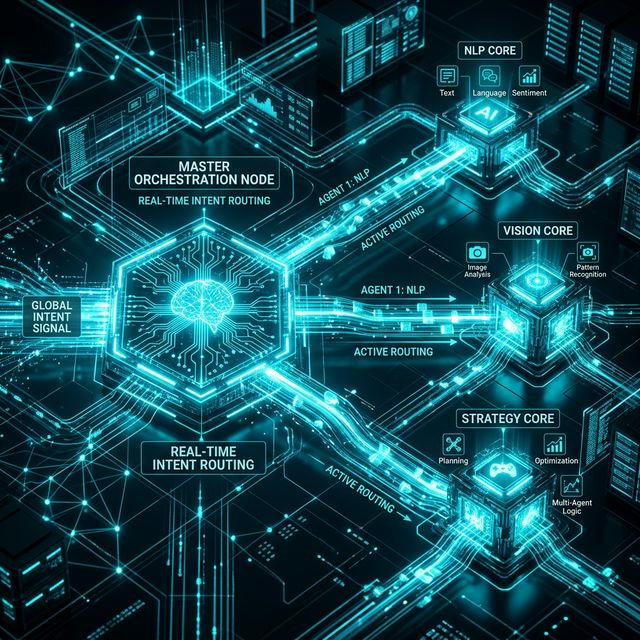

An AI-native product puts generative AI at the core of the user experience rather than bolting it on as a feature. The architecture relies on three pillars:

- Continuous Context Loops: The system remembers every user action, learns from corrections, and proactively suggests the next step. It doesn't wait for a prompt; it observes the workflow.

- Proprietary Embeddings: While you might use a commodity LLM for generation, the retrieval-augmented generation (RAG) pipeline relies on your company's proprietary vector database of unique industry data.

- Multi-Agent Orchestration: Complex tasks are broken down and routed to specialized agents acting in concert, rather than relying on a single mega-prompt.
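
As a rough illustration of how the three pillars can fit together, here is a minimal Python sketch. Everything in it is an assumption made for the example: `VectorStore` is a toy in-memory stand-in for a proprietary vector database, `ContextLoop` is a bare event log, and the two agents are hypothetical placeholders where a real system would call an LLM.

```python
import math
from dataclasses import dataclass, field


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


@dataclass
class VectorStore:
    """Toy in-memory stand-in for a proprietary vector database."""
    rows: list[tuple[list[float], str]] = field(default_factory=list)

    def add(self, embedding: list[float], text: str) -> None:
        self.rows.append((embedding, text))

    def top_k(self, query: list[float], k: int = 2) -> list[str]:
        # Rank stored documents by similarity to the query embedding.
        ranked = sorted(self.rows, key=lambda r: cosine(query, r[0]), reverse=True)
        return [text for _, text in ranked[:k]]


@dataclass
class ContextLoop:
    """Records user actions and corrections so later steps can use them."""
    events: list[str] = field(default_factory=list)

    def observe(self, event: str) -> None:
        self.events.append(event)

    def recent(self, n: int = 3) -> list[str]:
        return self.events[-n:]


# Hypothetical specialized agents; a real one would call an LLM here.
def extraction_agent(task: str, context: list[str]) -> str:
    return f"extract[{task}] using {len(context)} context items"


def summary_agent(task: str, context: list[str]) -> str:
    return f"summarize[{task}] using {len(context)} context items"


AGENTS = {"extract": extraction_agent, "summarize": summary_agent}


def orchestrate(kind: str, task: str, query_vec: list[float],
                store: VectorStore, loop: ContextLoop) -> str:
    """Route a task to one agent, giving it retrieved documents plus recent user history."""
    context = store.top_k(query_vec) + loop.recent()
    result = AGENTS[kind](task, context)
    loop.observe(f"{kind}:{task}")  # every completed step feeds back into the loop
    return result
```

The point of the sketch is the shape, not the components: the generation step is swappable, while the stored embeddings and the accumulated event history are the parts a competitor cannot copy with a prompt.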

How to Build a Defensible Moat in SaaS

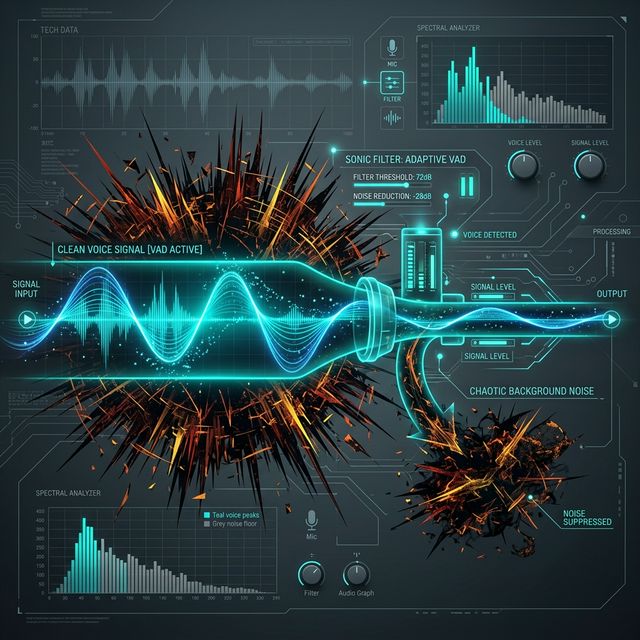

To survive, AI SaaS founders must build structural moats. The most effective moat in 2026 is workflow integration. If your AI tool automatically extracts data, categorizes it, and updates the user's Salesforce records, Jira tickets, and Slack channels in one step, it becomes indispensable. The value is not the text generation; it is the integration layer doing the work the human used to do.
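
A minimal sketch of that "one step" fan-out might look like the following. The connector class, the `categorize` rule, and all names are hypothetical placeholders for illustration; no real Salesforce, Jira, or Slack API is called.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Record:
    customer: str
    category: str
    summary: str


class Connector(Protocol):
    name: str

    def push(self, record: Record) -> str: ...


@dataclass
class LoggingConnector:
    """Hypothetical stand-in for a CRM, ticketing, or chat integration."""
    name: str

    def push(self, record: Record) -> str:
        # A real connector would call the vendor's API here.
        return f"{self.name}: {record.category} update for {record.customer}"


def categorize(text: str) -> str:
    """Placeholder rule; in practice this is where a model call would go."""
    return "bug" if "error" in text.lower() else "request"


def run_workflow(customer: str, text: str,
                 connectors: list[Connector]) -> dict[str, str]:
    """Extract, categorize, then push the same record to every connected system."""
    record = Record(customer=customer, category=categorize(text), summary=text[:80])
    results: dict[str, str] = {}
    for connector in connectors:
        try:
            results[connector.name] = connector.push(record)
        except Exception as exc:  # one failing system should not block the others
            results[connector.name] = f"failed: {exc}"
    return results
```

Note how little of this code involves text generation. Most of the engineering effort, and most of the switching cost for the customer, sits in the connectors.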

The Hardware Reality is Catching Up

We are seeing founders shift toward "Edge AI": running smaller, highly specialized local models on the user's device. This reduces API costs for the startup and, because the data never leaves the device, removes a major data privacy objection from enterprise clients. If you are building B2B AI SaaS, offering local inference is a significant competitive advantage.
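
One way to picture this is a local-first router, sketched below under stated assumptions: `local_model` and `hosted_model` are stand-ins rather than real inference calls, and the `LOCAL_MAX_CHARS` threshold is an arbitrary illustrative value.

```python
from dataclasses import dataclass


@dataclass
class Request:
    prompt: str
    contains_sensitive_data: bool


def local_model(prompt: str) -> str:
    # Stand-in for an on-device model call.
    return f"local:{len(prompt)}"


def hosted_model(prompt: str) -> str:
    # Stand-in for a paid API call to a hosted model.
    return f"hosted:{len(prompt)}"


# Hypothetical limit on what the small local model handles well.
LOCAL_MAX_CHARS = 2000


def route(req: Request) -> str:
    """Keep sensitive data on the device; send only long, non-sensitive prompts out."""
    if req.contains_sensitive_data or len(req.prompt) <= LOCAL_MAX_CHARS:
        return local_model(req.prompt)
    return hosted_model(req.prompt)
```

Under this policy, the hosted API is only billed for requests the local model cannot reasonably serve, and anything flagged as sensitive never leaves the device.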

Build an AI-Native Product, Not a Wrapper

We architect defensible AI systems blending powerful LLMs with custom vector databases and robust workflow integrations. Turn your AI idea into an un-copyable business.

Talk to our Architecture Team