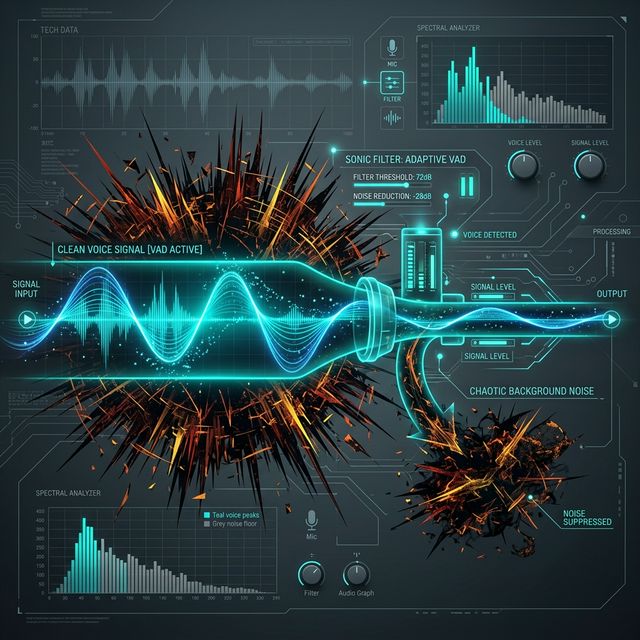

Most developers focus on the shiny new LLM or the hyper-realistic TTS voice. But much of the difference between a robotic chatbot and a fluid conversational agent comes down to a tiny, highly optimized neural network called Silero VAD.

What is VAD?

Voice Activity Detection answers a single, rapid-fire question dozens of times every second, once per short chunk of audio: "Is a human speaking right now?" A good VAD is trained to ignore dogs barking, keyboards clacking, and wind noise, and to trigger only when actual speech occurs.

The Interruption Problem

Imagine your AI agent is giving a long answer. The human user says, "Wait, stop, I actually meant..."

If you don't have robust VAD, the AI will just keep talking over the human. A proper VAD integration instantly detects the human interruption, cuts off the AI's ongoing audio stream, cancels the current LLM generation task, and starts recording the new context. This makes the interaction feel like a real dialogue.
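The interruption flow above can be sketched with `asyncio` task cancellation. This is a minimal illustration, not a production design: `BargeInController`, `fake_llm_and_tts`, and the timings are hypothetical names and values invented for the example, and a real agent would plug in its own LLM-streaming and audio-playback coroutines.

```python
import asyncio


class BargeInController:
    """Tracks the agent's in-flight work so a speech-start event can cancel it.

    Hypothetical helper for illustration only.
    """

    def __init__(self) -> None:
        self._tasks: list[asyncio.Task] = []
        self.recording = False

    def start_turn(self, *coros) -> None:
        # Run generation and playback as tasks so they can be cancelled later.
        self._tasks = [asyncio.create_task(c) for c in coros]

    async def on_speech_start(self) -> None:
        # Called by the VAD layer as soon as it detects user speech.
        for task in self._tasks:
            task.cancel()
        # Wait for the cancellations to complete so no stale audio is emitted.
        await asyncio.gather(*self._tasks, return_exceptions=True)
        self._tasks = []
        self.recording = True  # begin capturing the user's new utterance


async def fake_llm_and_tts(spoken: list[str]) -> None:
    # Stand-in for streaming an LLM answer through TTS, one word at a time.
    for word in "this is a very long answer that keeps going".split():
        spoken.append(word)
        await asyncio.sleep(0.05)


async def demo() -> tuple[list[str], bool]:
    spoken: list[str] = []
    controller = BargeInController()
    controller.start_turn(fake_llm_and_tts(spoken))
    await asyncio.sleep(0.12)  # the user interrupts mid-answer
    await controller.on_speech_start()
    return spoken, controller.recording


if __name__ == "__main__":
    words, recording = asyncio.run(demo())
    print(f"agent said {len(words)} of 9 words before the interruption")
    print(f"recording new user input: {recording}")
```

The key design choice is that generation and playback run as cancellable tasks rather than as one blocking call, so the VAD event handler has something concrete to stop.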

Silero VAD: The Industry Standard

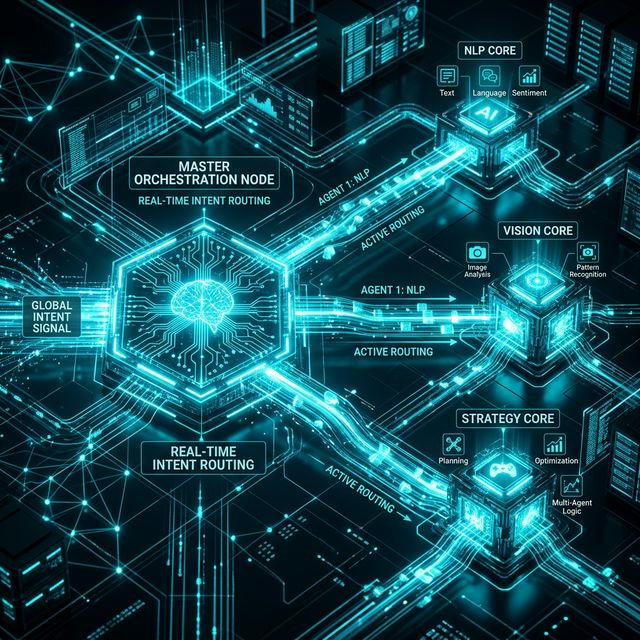

While many STT providers include VAD, relying on cloud STT for VAD adds a network round trip to every speech decision. Silero VAD is a highly optimized, open-source model that runs locally on the orchestration server (or even directly in the user's browser via WebAssembly). Because inference is local, each short chunk of audio can be classified in single-digit milliseconds or less, so the agent can react to an interruption almost as soon as the speech arrives.
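A local VAD model typically emits a speech probability per audio chunk, and the orchestration layer still has to turn that stream into "speech started" and "speech ended" events. The sketch below shows one common way to do that with a threshold and a short silence hold. It does not call Silero itself: `speech_prob` is a placeholder for the local model call, `toy_prob` is a crude amplitude-based stand-in, and the sample rate, chunk size, threshold, and hold length are all assumed values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator, Sequence

SAMPLE_RATE = 16_000   # assumed input sample rate (Hz)
CHUNK_SAMPLES = 512    # assumed chunk size: 512 / 16000 = 32 ms per decision
CHUNK_MS = 1000 * CHUNK_SAMPLES / SAMPLE_RATE


@dataclass
class VadEvent:
    kind: str      # "start" or "end"
    at_ms: float   # start time of the chunk that triggered the event


def speech_events(
    chunks: Iterable[Sequence[float]],
    speech_prob: Callable[[Sequence[float]], float],
    threshold: float = 0.5,
    min_silence_chunks: int = 3,
) -> Iterator[VadEvent]:
    """Turn per-chunk speech probabilities into start/end events."""
    speaking = False
    silent_run = 0
    for i, chunk in enumerate(chunks):
        if speech_prob(chunk) >= threshold:
            silent_run = 0
            if not speaking:
                speaking = True
                yield VadEvent("start", i * CHUNK_MS)
        elif speaking:
            silent_run += 1
            # Require a short run of silence so brief pauses do not end the turn.
            if silent_run >= min_silence_chunks:
                speaking = False
                yield VadEvent("end", i * CHUNK_MS)


def toy_prob(chunk: Sequence[float]) -> float:
    # Toy stand-in (NOT Silero): mean absolute amplitude, clipped to [0, 1].
    return min(1.0, sum(abs(s) for s in chunk) / len(chunk))


quiet = [0.0] * CHUNK_SAMPLES
loud = [0.8] * CHUNK_SAMPLES
# 2 quiet chunks, 3 speech chunks, a 1-chunk pause, 1 speech chunk, then silence.
demo_chunks = [quiet] * 2 + [loud] * 3 + [quiet] + [loud] + [quiet] * 4

if __name__ == "__main__":
    for event in speech_events(demo_chunks, toy_prob):
        print(event.kind, event.at_ms)
```

In this toy run the one-chunk pause does not end the turn, because the silence hold requires three consecutive quiet chunks. The "start" event is the one an agent would wire to its interruption handler, and with 32 ms chunks there are about 31 decisions per second.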

Master Edge AI and WebAssembly

We implement local, edge-based AI models to handle latency-critical tasks like VAD before the data ever reaches the cloud.

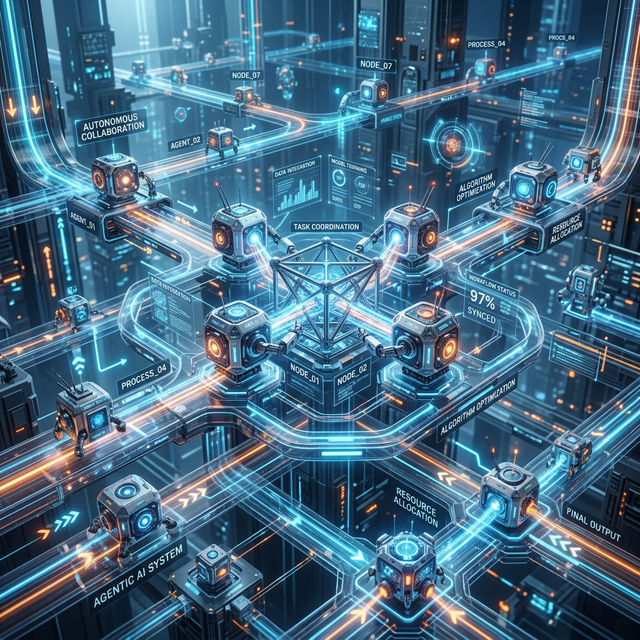

Upgrade Your Architecture