If your AI agents can only read text, they are operating blind. True agentic operations require understanding the visual and auditory context of the enterprise. In 2026, text-only orchestration is dead.

The Era of Blind AI: The Problem with Text-Only RAG

Retrieval-Augmented Generation (RAG) addressed the most glaring issue with Large Language Models: hallucination. By grounding the LLM in a vector database containing your enterprise documentation, you constrained the model to answer from approved, factual data. However, the first generation of RAG pipelines created a new problem: severe contextual blindness.

Consider a standard B2B SaaS customer support workflow. A customer experiences a critical failure in your software's billing UI. They don't write a five-paragraph essay detailing the CSS misalignment and the disabled submit button. Instead, they hit Command+Shift+4, take a screenshot of the broken interface, attach it to a Zendesk ticket, and write a two-word message: "It's broken."

When this ticket enters a standard text-only RAG pipeline, the pipeline runs Optical Character Recognition (OCR) on the image before anything is embedded. OCR extracts the raw text (perhaps the word "Submit" and some generic navigation labels) and discards the rest. The visual layout, the fact that the button is greyed out (disabled), the red error toast in the corner, and the overall sense that the UI is broken are all lost in the translation to text.

When the AI attempts to perform a semantic search to find a solution, it searches only for the OCR'd text. It fails to match the vectors to the actual underlying problem because the most important data—the visual context—was never embedded. The agent cannot act effectively because it cannot "see."

Enter Gemini Embedding 2: The Unified Latent Space

Google has fundamentally shifted the engineering landscape with the release of Gemini Embedding 2. Unlike the previous generation of multimodal AI, which essentially bolted a vision encoder onto a language model (often resulting in latency bottlenecks and misalignment), Gemini Embedding 2 is natively multimodal from the ground up.

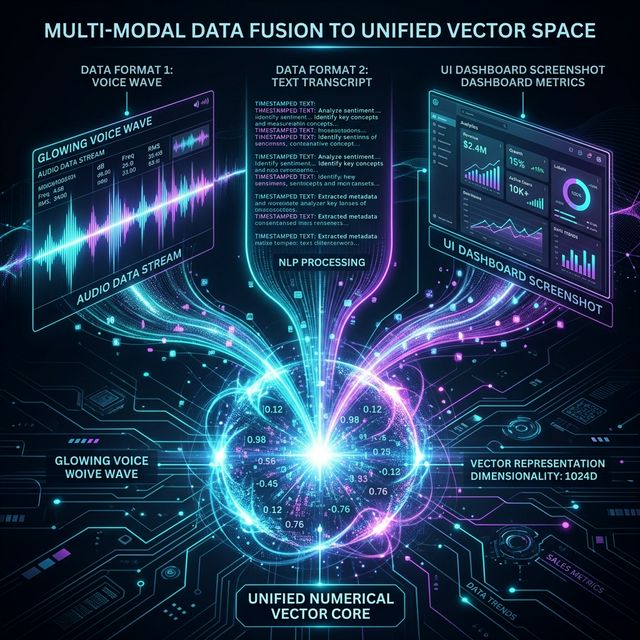

It maps images, video, audio, and text into a single, shared, dense vector space. To understand the magnitude of this technical achievement, you must understand latent spaces. In traditional NLP, the vector for the word "Dog" sits close to the vector for "Puppy." In Gemini Embedding 2's space, the text vector for the phrase "pressure valve failure" sits mathematically adjacent to the visual vector of a photograph showing a broken, leaking pipe, which in turn sits adjacent to the audio vector of an angry customer shouting about a flood on a VoIP call.
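"Adjacent" in an embedding space is usually measured with cosine similarity. The sketch below shows only the arithmetic: the 4-dimensional vectors are made up for illustration (real embeddings have hundreds of dimensions) and are not output from Gemini Embedding 2.

```typescript
// Cosine similarity: the standard closeness measure for embedding vectors.
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) {
    throw new Error(`Dimension mismatch: ${a.length} vs ${b.length}`);
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Hypothetical toy vectors standing in for real multimodal embeddings.
const textValveFailure = [0.9, 0.1, 0.3, 0.0]; // text: "pressure valve failure"
const imageLeakingPipe = [0.8, 0.2, 0.4, 0.1]; // photo of a leaking pipe
const imageBillingPage = [0.0, 0.9, 0.1, 0.7]; // unrelated billing screenshot

console.log(cosineSimilarity(textValveFailure, imageLeakingPipe).toFixed(3));
console.log(cosineSimilarity(textValveFailure, imageBillingPage).toFixed(3));
```

A score near 1 means the two items point in nearly the same direction; a score near 0 means they are unrelated.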

For CTOs, this means the fragmentation of data pipelines is over. You no longer need separate vector databases for your audio transcriptions (post-Whisper processing) and your CRM notes. By embedding everything into an engine like Pinecone, Qdrant, or pgvector with this single model, your autonomous agents can perform cross-modal semantic search with a single query. The agent retrieves the call recording, the UI screenshot, and the Jira ticket together because their vectors all land near the same concept in the index.
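Because every modality shares one space, cross-modal retrieval is an ordinary nearest-neighbor query over one index. The brute-force sketch below uses hypothetical record IDs and 3-dimensional toy vectors; a real deployment would send the same query to Pinecone, Qdrant, or pgvector instead of scanning an array.

```typescript
type Modality = 'text' | 'image' | 'audio';

// Hypothetical record shape: every vector comes from the same embedding model.
interface EmbeddedRecord {
  id: string;
  modality: Modality;
  vector: number[];
}

function cosine(a: number[], b: number[]): number {
  let dot = 0;
  let na = 0;
  let nb = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    na += a[i] * a[i];
    nb += b[i] * b[i];
  }
  return na === 0 || nb === 0 ? 0 : dot / (Math.sqrt(na) * Math.sqrt(nb));
}

// Linear-scan top-k; an ANN index replaces this scan in production.
function topK(query: number[], records: EmbeddedRecord[], k: number): EmbeddedRecord[] {
  return records
    .map((r) => ({ r, score: cosine(query, r.vector) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map((x) => x.r);
}

// One mixed-modality index with toy vectors.
const records: EmbeddedRecord[] = [
  { id: 'jira-101', modality: 'text', vector: [0.9, 0.1, 0.0] },
  { id: 'call-7', modality: 'audio', vector: [0.8, 0.2, 0.1] },
  { id: 'screenshot-3', modality: 'image', vector: [0.85, 0.15, 0.05] },
  { id: 'hr-policy', modality: 'text', vector: [0.0, 0.1, 0.9] },
];

// A single query returns hits from all three modalities.
const hits = topK([1, 0.1, 0], records, 3);
console.log(hits.map((h) => `${h.id} (${h.modality})`));
```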

Architectural Paradigm Shift: Building the Multimodal CRM Layer

To implement this at scale, enterprise architecture must evolve. The traditional pipeline of [Source -> Text Extraction -> Embedding -> Vector DB] must be replaced with a [Source -> Multimodal Embedding -> Vector DB] flow.

The Ingestion Pipeline

When data hits your ingestion edge (e.g., an AWS Lambda function triggered by an S3 upload from Zendesk or Salesforce), you no longer split the logic by MIME type. You send the raw files directly to the Gemini API in a single request.

```typescript
// TypeScript Example: Multimodal Ingestion Pipeline
// Sketch using the @google/genai SDK in Vertex AI mode. The model ID is a
// placeholder: substitute the published identifier for Gemini Embedding 2.
import { GoogleGenAI } from '@google/genai';
import { Pinecone } from '@pinecone-database/pinecone';

const ai = new GoogleGenAI({ vertexai: true, project: 'acme-corp-123', location: 'us-central1' });
const pc = new Pinecone({ apiKey: process.env.PINECONE_API_KEY! });

const EMBEDDING_MODEL = 'GEMINI_EMBEDDING_2_MODEL_ID'; // placeholder, not a real model ID
const DIMENSIONS = 768; // must match the dimension of the Pinecone index

async function ingestTicket(
  ticketId: string,
  textNote: string,
  audioBase64: string,
  imageBase64: string,
): Promise<void> {
  // One Content object carrying three modalities as separate parts
  // (assumes the model accepts mixed text/audio/image parts in one request)
  const response = await ai.models.embedContent({
    model: EMBEDDING_MODEL,
    contents: [
      {
        parts: [
          { text: textNote },
          { inlineData: { mimeType: 'audio/mp3', data: audioBase64 } },
          { inlineData: { mimeType: 'image/png', data: imageBase64 } },
        ],
      },
    ],
    config: { outputDimensionality: DIMENSIONS },
  });

  const vector = response.embeddings?.[0]?.values;
  if (!vector || vector.length !== DIMENSIONS) {
    throw new Error(`Unexpected embedding for ticket ${ticketId}`);
  }

  // Upsert the unified 768-dimensional vector into Pinecone
  const index = pc.Index('enterprise-crm-multimodal');
  await index.upsert([
    {
      id: ticketId,
      values: vector,
      metadata: { source: 'zendesk', type: 'support_ticket' },
    },
  ]);

  console.log(`Successfully embedded multimodal ticket ${ticketId}`);
}
```

Because the model handles the cross-modal alignment internally, the resulting vector represents the combined meaning of the note, the call audio, and the screenshot. Your application logic no longer branches on file type, which makes it dramatically simpler.

Use Case: The "What To Automate Next" Dashboard

Once your enterprise data is continuously mapped into this unified similarity layer, the potential for proactive operations (Proactive Ops) explodes. One of the most powerful implementations we have built for clients at Mansoori Technologies is the "What to Automate Next" dashboard.

Operations teams historically struggle to identify which internal processes are wasting the most time until employees reach a breaking point and complain. A "What to Automate Next" dashboard actively monitors the company's vector index by running unsupervised clustering algorithms (like DBSCAN or K-Means) over the incoming stream of multimodal embeddings.
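DBSCAN suits this job because it needs no cluster count up front and labels sparse points as noise. Below is a minimal O(n²) sketch using cosine distance; the `eps` and `minPts` values are illustrative assumptions to tune on real data, and a production system would use a library implementation or an ANN-backed neighbor query rather than this brute-force scan.

```typescript
// Cosine distance = 1 - cosine similarity; zero vectors are treated as maximally distant.
function cosineDistance(a: number[], b: number[]): number {
  let dot = 0;
  let na = 0;
  let nb = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    na += a[i] * a[i];
    nb += b[i] * b[i];
  }
  if (na === 0 || nb === 0) return 1;
  return 1 - dot / (Math.sqrt(na) * Math.sqrt(nb));
}

// Returns a cluster label per point; -1 marks noise.
function dbscan(points: number[][], eps: number, minPts: number): number[] {
  const UNVISITED = -2;
  const NOISE = -1;
  const labels = new Array<number>(points.length).fill(UNVISITED);

  // Brute-force neighborhood query (includes the point itself).
  const neighbors = (i: number): number[] => {
    const out: number[] = [];
    for (let j = 0; j < points.length; j++) {
      if (cosineDistance(points[i], points[j]) <= eps) out.push(j);
    }
    return out;
  };

  let clusterId = 0;
  for (let i = 0; i < points.length; i++) {
    if (labels[i] !== UNVISITED) continue;
    const seeds = neighbors(i);
    if (seeds.length < minPts) {
      labels[i] = NOISE; // may later be claimed as a border point
      continue;
    }
    labels[i] = clusterId;
    const queue = [...seeds];
    while (queue.length > 0) {
      const j = queue.shift()!;
      if (labels[j] === NOISE) labels[j] = clusterId; // border point: join, do not expand
      if (labels[j] !== UNVISITED) continue;
      labels[j] = clusterId;
      const jNeighbors = neighbors(j);
      if (jNeighbors.length >= minPts) queue.push(...jNeighbors); // core point: expand
    }
    clusterId++;
  }
  return labels;
}

// Two tight toy groups plus one outlier.
const points = [
  [1, 0, 0], [0.99, 0.05, 0], [0.98, 0.1, 0],
  [0, 1, 0], [0.05, 0.99, 0], [0.1, 0.98, 0],
  [0, 0, 1],
];
console.log(dbscan(points, 0.05, 3));
```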

If the algorithm detects a sudden, massive, highly dense cluster of vectors, it triggers an anomaly alert. For example, it might identify a tight cluster containing 400 screenshots of the exact same overly complex AWS billing console, accompanied by frustrated audio recordings from Slack huddles, and text chats saying "Let me manually check the budget again."
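What counts as "sudden" and "massive" is a policy choice. One simple, hypothetical rule flags a cluster once its member count in the current window passes an absolute floor and is several times its historical baseline; density is already enforced by the clustering step's distance threshold. The thresholds below are assumptions, and the 400-member cluster echoes the example above.

```typescript
interface ClusterStats {
  label: string;
  currentCount: number; // members observed in the current window
  baselineCount: number; // average members per window over prior windows
}

// Hypothetical alert rule: large in absolute terms AND much larger than usual.
function shouldAlert(s: ClusterStats, minSize = 100, growthRatio = 3): boolean {
  if (s.currentCount < minSize) return false;
  // A brand-new cluster with no baseline counts as a spike once it passes minSize.
  if (s.baselineCount === 0) return true;
  return s.currentCount / s.baselineCount >= growthRatio;
}

const clusters: ClusterStats[] = [
  { label: 'cluster-17', currentCount: 400, baselineCount: 35 }, // sudden and large
  { label: 'cluster-4', currentCount: 120, baselineCount: 110 }, // large but steady
  { label: 'cluster-9', currentCount: 40, baselineCount: 2 }, // fast-growing but small
];

console.log(clusters.filter((c) => shouldAlert(c)).map((c) => c.label));
```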

The dashboard surfaces this cluster, labels it using an LLM summary ("Manual AWS Budget Auditing"), and estimates the engineering hours lost from the frequency of matching vectors over the last 30 days. It doesn't wait for a manager to notice a drop in productivity; the vector data provides quantitative evidence that the inefficiency exists. Your team can then write a Python script to automate the budget check and reclaim those hours. Proactive operations move organizations from fighting fires to preventing them.
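The hours-lost figure can be as simple as counting cluster members inside the 30-day window and multiplying by an assumed handling time per occurrence. The 15-minute cost per event below is a made-up input; in practice it would come from time-tracking data or team estimates.

```typescript
interface ClusterEvent {
  timestamp: Date;
}

const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Hypothetical estimate: (events inside the window) × (assumed minutes per event) / 60.
function estimateHoursLost(
  events: ClusterEvent[],
  minutesPerEvent: number,
  now: Date,
  windowDays = 30,
): number {
  const windowStart = now.getTime() - windowDays * MS_PER_DAY;
  const recent = events.filter((e) => {
    const t = e.timestamp.getTime();
    return t >= windowStart && t <= now.getTime();
  });
  return (recent.length * minutesPerEvent) / 60;
}

const now = new Date(Date.UTC(2026, 2, 31));
const events: ClusterEvent[] = [
  // 400 hypothetical events, one per hour over the last ~17 days
  ...Array.from({ length: 400 }, (_, i) => ({ timestamp: new Date(now.getTime() - i * 60 * 60 * 1000) })),
  // 50 older events that fall outside the 30-day window
  ...Array.from({ length: 50 }, () => ({ timestamp: new Date(now.getTime() - 45 * MS_PER_DAY) })),
];

console.log(estimateHoursLost(events, 15, now));
```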

Cost and Latency at Scale: Quantization Strategies

Of course, this power does not come without infrastructure challenges. Storing a 768-dimensional float32 vector for every interaction across a 500-person company quickly adds up to millions of vectors, which is expensive if they are all held in RAM for high-speed Approximate Nearest Neighbor (ANN) search.
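The raw storage cost is easy to estimate: vectors × dimensions × bits per dimension. This sketch covers only the vectors themselves; ANN index structures (such as HNSW graph links) and metadata add overhead on top.

```typescript
// Raw vector storage in bytes, excluding index and metadata overhead.
function rawVectorBytes(numVectors: number, dims: number, bitsPerDim: number): number {
  return (numVectors * dims * bitsPerDim) / 8;
}

const N = 1_000_000;
const DIMS = 768;
const GB = 1e9;

for (const [label, bits] of [['float32', 32], ['int8 (scalar)', 8], ['binary', 1]] as const) {
  console.log(`${label}: ${(rawVectorBytes(N, DIMS, bits) / GB).toFixed(3)} GB`);
}
```

For one million 768-dimensional vectors this gives about 3.07 GB at full precision, 0.77 GB with int8 scalar quantization, and 0.096 GB with binary quantization.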

Scalar and Binary Quantization

To scale proactive multimodal AI without going bankrupt, engineering teams must leverage Vector Quantization. Gemini Embedding 2's vectors perform exceptionally well under Scalar Quantization (reducing 32-bit floats to 8-bit integers) and even Binary Quantization (reducing floats to raw bits).
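In its simplest form, scalar quantization maps each float into one of 256 integer buckets over a fixed range. For brevity, this sketch fits a single global min/max range on the data. Vector databases handle range estimation and quantization internally, so the code only illustrates the mechanics.

```typescript
// Fit one global [min, max] range over a dataset (simplification for illustration).
function fitRange(vectors: number[][]): { min: number; max: number } {
  let min = Infinity;
  let max = -Infinity;
  for (const v of vectors) {
    for (const x of v) {
      if (x < min) min = x;
      if (x > max) max = x;
    }
  }
  return { min, max };
}

// Map each float32 component to an int8 bucket: [min, max] -> 0..255 -> -128..127.
function quantizeInt8(v: number[], min: number, max: number): Int8Array {
  const scale = (max - min) / 255 || 1; // avoid division by zero when min === max
  const out = new Int8Array(v.length);
  for (let i = 0; i < v.length; i++) {
    const clamped = Math.min(max, Math.max(min, v[i]));
    out[i] = Math.round((clamped - min) / scale) - 128;
  }
  return out;
}

// Approximate reconstruction; per-component error is at most scale / 2.
function dequantizeInt8(q: Int8Array, min: number, max: number): number[] {
  const scale = (max - min) / 255 || 1;
  return Array.from(q, (x) => (x + 128) * scale + min);
}

const sample = [0.12, -0.48, 0.91, -0.05];
const range = fitRange([sample]);
console.log(quantizeInt8(sample, range.min, range.max));
```

Each component shrinks from 4 bytes to 1, a 4× saving, at the cost of a bounded rounding error.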

By implementing Binary Quantization in a database like Qdrant or Milvus, and using Hamming distance for retrieval instead of Cosine Similarity, you can reduce the memory footprint of your raw vectors by more than 95%: each 32-bit float becomes a single bit, a 32× reduction. Our benchmarks show that reducing a 1 million vector dataset from full precision to binary results in only a 2-3% drop in retrieval accuracy, a highly acceptable tradeoff for operational and routing tasks.
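The two mechanics named above, sign-based binary quantization and Hamming distance, can be sketched as follows. Thresholding each dimension at zero is one common choice and is assumed here. Databases implement this internally, so you would normally enable it through collection configuration rather than code.

```typescript
// Binary quantization: keep only whether each dimension is positive, packed 8 per byte.
function binarize(v: number[]): Uint8Array {
  const out = new Uint8Array(Math.ceil(v.length / 8));
  for (let i = 0; i < v.length; i++) {
    if (v[i] > 0) out[i >> 3] |= 1 << (i & 7);
  }
  return out;
}

// Hamming distance: number of differing bits (lower means more similar).
function hamming(a: Uint8Array, b: Uint8Array): number {
  if (a.length !== b.length) throw new Error('Length mismatch');
  let dist = 0;
  for (let i = 0; i < a.length; i++) {
    let x = a[i] ^ b[i];
    while (x) {
      x &= x - 1; // clear the lowest set bit (Kernighan popcount)
      dist++;
    }
  }
  return dist;
}

const a = [0.5, -0.2, 0.1, -0.9, 0.3, 0.0, -0.4, 0.8, 0.2];
const b = a.map((x, i) => (i < 2 ? -x : x)); // flip the sign of the first two dimensions
console.log(hamming(binarize(a), binarize(b)));
```

A 768-dimensional vector packs into 96 bytes instead of 3,072. Databases that support binary quantization commonly offer oversampling plus rescoring against the original vectors to recover some of the lost accuracy.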

Furthermore, an optimized infrastructure uses a tiered storage architecture. The hottest vectors (operations and tickets from the last 30 days) remain in quantized RAM. Historical vectors (recordings from last year) move to cheaper SSD- or object-storage-backed tiers (e.g., S3) and are searched via disk-oriented indexes such as DiskANN or IVF (inverted file) indexes. This keeps latency in the millisecond range for live agent operations while safely archiving historical context.
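The tiering decision itself can be a simple age check. This hypothetical sketch routes each vector by its creation date against the 30-day hot window. The tier names are illustrative, and the actual migration and search calls depend on your database.

```typescript
type Tier = 'hot-ram' | 'cold-disk';

interface StoredVector {
  id: string;
  createdAt: Date;
}

// Mirrors the 30-day hot window described above; the cutoff is a tunable assumption.
const HOT_WINDOW_MS = 30 * 24 * 60 * 60 * 1000;

function assignTier(createdAt: Date, now: Date): Tier {
  const ageMs = now.getTime() - createdAt.getTime();
  return ageMs <= HOT_WINDOW_MS ? 'hot-ram' : 'cold-disk';
}

// Group vector IDs by target tier, e.g. for a nightly job that migrates aged-out vectors.
function partitionByTier(items: StoredVector[], now: Date): Record<Tier, string[]> {
  const tiers: Record<Tier, string[]> = { 'hot-ram': [], 'cold-disk': [] };
  for (const item of items) {
    tiers[assignTier(item.createdAt, now)].push(item.id);
  }
  return tiers;
}

const now = new Date(Date.UTC(2026, 2, 31));
const daysAgo = (d: number) => new Date(now.getTime() - d * 24 * 60 * 60 * 1000);

console.log(
  partitionByTier(
    [
      { id: 'ticket-today', createdAt: daysAgo(0) },
      { id: 'ticket-last-week', createdAt: daysAgo(7) },
      { id: 'call-last-year', createdAt: daysAgo(365) },
    ],
    now,
  ),
);
```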

The End of Whisper and CLIP Tapes

Prior to 2026, building what Gemini Embedding 2 offers required 'taping' together disparate open-source models. Engineering teams ran OpenAI's Whisper for audio, passed the text to `text-embedding-ada-002`, simultaneously ran OpenAI's CLIP model for image-text alignment, and desperately tried to average or concatenate the resulting vectors using custom aggregation layers.

This approach was an operational nightmare. The concatenation created massive, unwieldy vector sizes, and the lack of native alignment meant an image of a spreadsheet often didn't map accurately to the spoken numbers regarding that spreadsheet. Maintaining three separate model deployments, handling the varied latency of each API, and dealing with partial failures destroyed the velocity of small teams.

A natively multimodal model bypasses this completely. By routing audio, images, and text to a single endpoint, you eliminate three points of failure, substantially reduce your cloud compute overhead, and gain semantic alignment that concatenating separately trained models cannot provide, because each model's output occupies its own block of dimensions with no shared coordinate system.

Conclusion: Stop Building Text Pipelines

The transition from text-only vector search to multimodal vector search is not an iterative improvement; it is a hard shift in the foundational architecture of enterprise AI. As autonomous agents become the primary operators of our systems, their ability to 'see' the UI they are interacting with and 'hear' the frustration in a customer's voice is no longer optional.

If your engineering team is currently building an AI pipeline that relies strictly on text extraction, OCR, and transcriptions, they are building legacy software. The future of operations is proactive, the future of agents is autonomous, and the future of enterprise data is entirely, inescapably multimodal. It is time to upgrade your index.