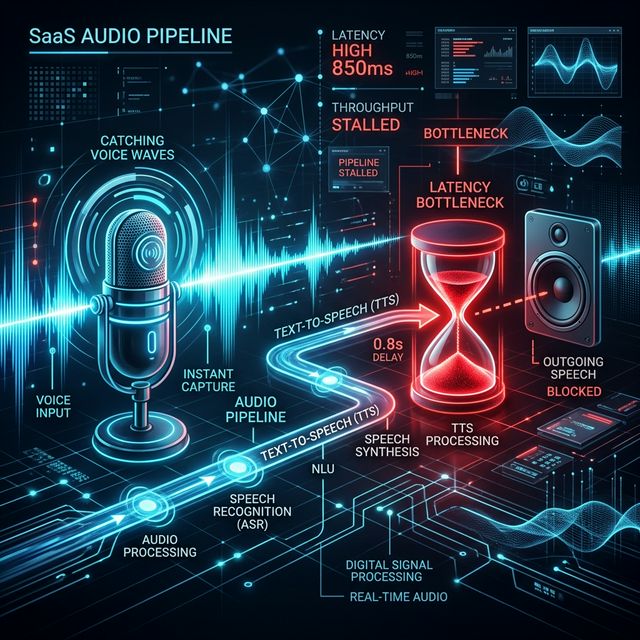

In natural human conversation, the gap between turns is typically under 300ms. If your Voice AI agent takes 1,000ms to respond, it feels broken. Closing that gap usually requires re-architecting your text-to-speech (TTS) pipeline.

The Anatomy of Latency

When a human stops speaking to a Voice AI, a clock immediately starts ticking. Four stages run before the user hears a reply:

1. Voice activity detection (VAD) detects the end of speech (~200ms).

2. Speech-to-text (STT) finishes the final transcription (~100ms).

3. The LLM begins streaming text (~150ms to first token).

4. TTS synthesizes the text to audio (~?).

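TTS is the big variable. Non-streaming TTS APIs need the complete text, often a full sentence or the entire response, before they generate any audio. The time the LLM takes to finish that text is then added on top of synthesis time, which pushes the response well past a conversational delay.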
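To make the budget concrete, here is a minimal sketch that tallies the stage estimates from the list above and shows how much time is left for TTS to produce its first audio. The function names and the dictionary keys are illustrative, and the numbers are the rough estimates quoted in this article, not measurements.

```python
# Rough per-stage estimates in milliseconds, copied from the list above.
# These are illustrative figures, not benchmarks.
PRE_TTS_STAGES_MS = {
    "vad_end_of_speech": 200,
    "stt_final_transcript": 100,
    "llm_first_token": 150,
}


def pre_tts_latency_ms(stages=PRE_TTS_STAGES_MS):
    """Total time spent before the TTS engine receives any text."""
    return sum(stages.values())


def tts_budget_ms(target_ms, stages=PRE_TTS_STAGES_MS):
    """Time left for TTS to emit its first audio (negative means over budget)."""
    return target_ms - pre_tts_latency_ms(stages)


if __name__ == "__main__":
    print("Before TTS:", pre_tts_latency_ms(), "ms")
    for target in (1000, 500):
        print(f"Target {target} ms -> TTS budget {tts_budget_ms(target)} ms")
```

With these estimates, 450ms is already spent before TTS sees any text, so a 500ms target leaves roughly 50ms for time-to-first-audio. That is why the TTS stage, and the stages feeding it, get so much attention.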

Streaming TTS to the Rescue

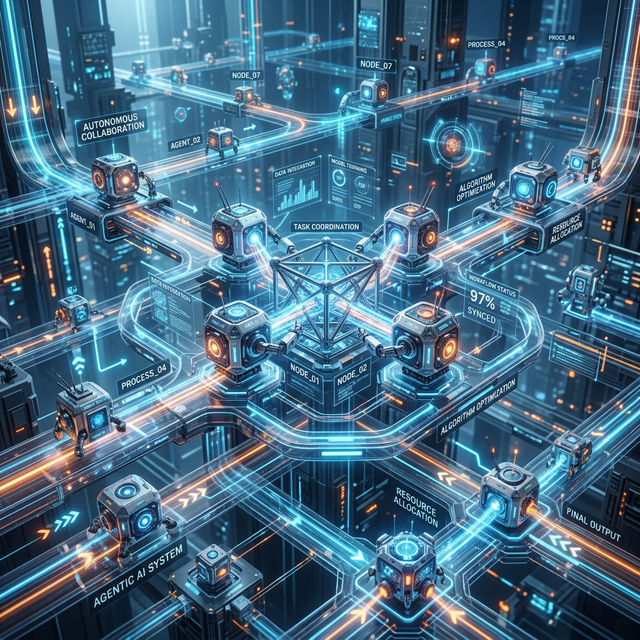

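Building conversational AI generally requires streaming TTS. Instead of waiting for a full sentence, the orchestrator forwards the LLM's output to the TTS engine in small chunks (individual words or even syllables) over WebSockets, and the TTS engine streams audio bytes back as soon as they are ready. Because the LLM is still generating text while the TTS engine synthesizes and the first audio plays, the stages overlap instead of running one after another. This pipelining is critical.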

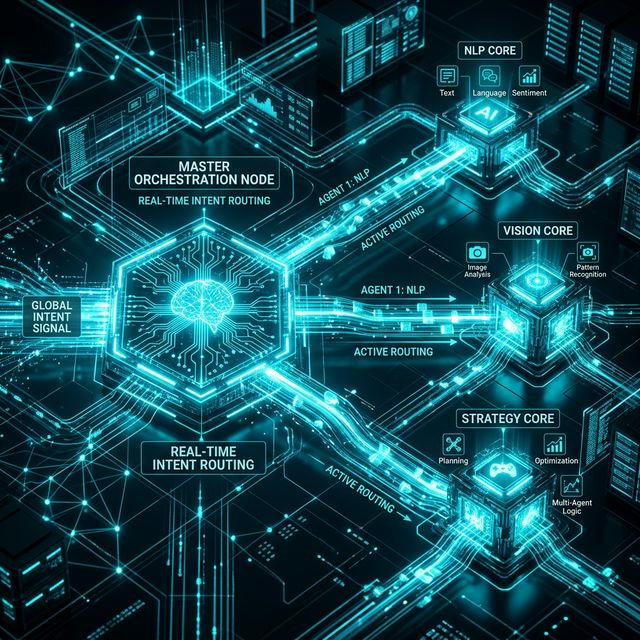
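The streaming handoff can be sketched with Python's `asyncio`. This is a simulation under stated assumptions, not a real integration: `fake_llm_tokens` and `fake_tts_stream` are hypothetical stand-ins for an LLM token stream and a streaming TTS engine, and the WebSocket transport is left out entirely. The point it illustrates is ordering: audio for the first word is produced before the LLM has finished its reply.

```python
import asyncio


async def fake_llm_tokens(text, delay_s=0.01):
    """Hypothetical stand-in for an LLM stream: yields one word at a time."""
    for word in text.split():
        await asyncio.sleep(delay_s)  # simulated generation time per word
        yield word


async def fake_tts_stream(text_chunks):
    """Hypothetical stand-in for streaming TTS: emits 'audio' per text chunk."""
    async for chunk in text_chunks:
        yield f"<audio:{chunk}>".encode()


async def run_pipeline(text):
    """Wire the LLM stream into the TTS stream and log the order of events."""
    events = []

    async def logged_tokens():
        async for word in fake_llm_tokens(text):
            events.append(("llm", word))
            yield word
        events.append(("llm", "<done>"))

    # Audio is consumed as soon as each chunk is synthesized,
    # while the LLM stand-in is still producing later words.
    async for audio in fake_tts_stream(logged_tokens()):
        events.append(("audio", audio))
    return events


if __name__ == "__main__":
    for kind, payload in asyncio.run(run_pipeline("hello there friend")):
        print(kind, payload)
```

In the event log, the first `audio` entry appears right after the first `llm` word and well before `<done>`. A sentence-buffered design would instead show every `llm` entry before any `audio` entry.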

The Cost of Speed

Achieving this speed is expensive. Older, robotic-sounding models can be run locally for free, but highly expressive AI voices from providers like Cartesia, ElevenLabs, or PlayHT need substantial GPU compute to generate audio in real time. This is why TTS often accounts for 60% of the total component cost of running a voice agent. In effect, you are paying to skip the line and get immediate GPU access.

Crush Your AI Latency

We specialize in streaming architectures that achieve sub-500ms conversational latency.

Book an Architecture Review